|---Module:text|Size:Small---| Artificial Intelligence has rapidly moved from experimental research into the core of modern digital products and services. Large language models, recommendation systems, computer vision and autonomous decision-making are now embedded in customer-facing applications, internal business processes and critical infrastructure. As adoption grows, so do the potential consequences of security failures.

Unlike traditional software, AI systems introduce new vulnerabilities such as prompt injection, jailbreaking, model manipulation and data leakage. These risks are amplified by the probabilistic nature of AI models, making their behaviour harder to predict and secure using conventional practices.

While modern software development has embraced Shift-Left security through DevSecOps, AI development often follows data-driven and experimental methodologies where security receives less attention. Recent incidents have shown how this gap can expose organisations to serious risks.

|---Module:text|Size:Small---|

Secure delivery of AI solutions

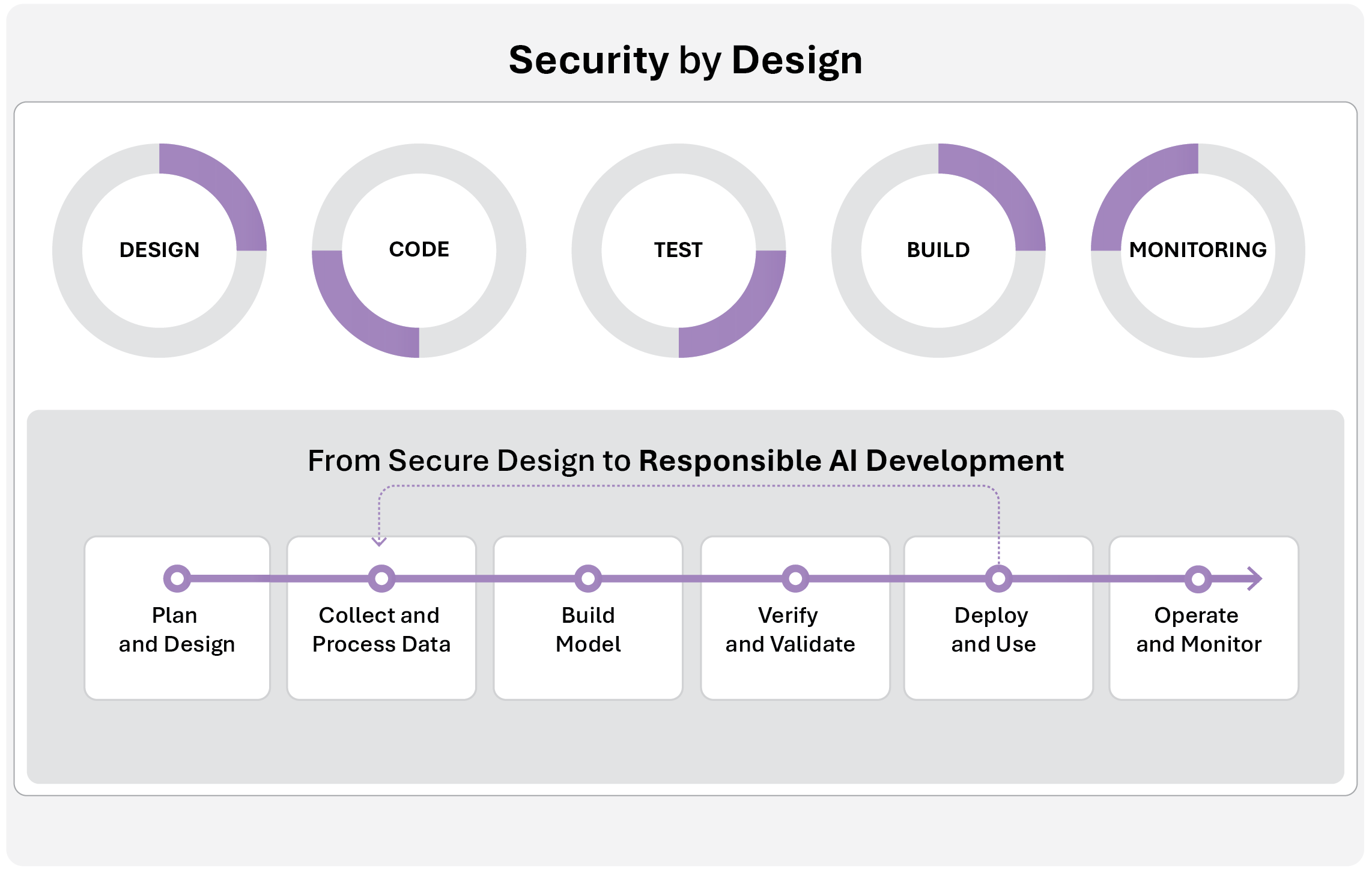

Traditional secure software development typically follows five phases: solution design, coding, testing, deployment, and monitoring. While effective for conventional applications, it does not fully address the unique characteristics and risks of AI systems.

To address this gap, Celfocus proposes an approach to secure AI development that complements the traditional lifecycle, adding practices specifically designed for AI-based solutions.

|---Module:image|Size:Small---|

|---Module:text|Size:Small---|

|---Module:text|Size:Small---|

AI Risks and Threats

AI systems face a wide range of security risks, including model poisoning, adversarial inputs, training-data reconstruction, prompt injection, and jailbreaking. As AI becomes more integrated into real-world applications, mitigating these threats is increasingly critical. Early threat modelling during the planning phase is essential to anticipate and address risks proactively. Standards such as MITRE ATLAS and OWASP Top 10 for LLMs provide guidance for this process.

Jailbreaking is a growing concern, exploiting models’ competing objectives and gaps in generalisation. Techniques like prefix injection or suppression of refusal behaviour can trick models into bypassing safety mechanisms, sometimes combining multiple methods to defeat guardrails and gain unintended outputs.

How can AI risks be mitigated? Defence strategies

Through sophisticated guardrail models, intent or anomaly detection, and adversarial fine-tuning. The framework encourages a proactive security approach, embedding protections from the start of AI development. Despite these measures, no defence is perfect, so assigning critical responsibilities to AI systems should be done with caution.

Celfocus advocates a proactive approach using AI-driven validation and stress-testing to simulate attacks and detect vulnerabilities early, strengthening AI security before deployment.